Traditional QA is about whether software works. Does the function return the right value? Does the API respond in under 200ms? Pass/fail, green/red, ship it.

That's fine for software. Bots are a different problem.

A bot can work perfectly — no errors, no crashes, responses every time — and still be a disaster. Wrong tone. Too eager. Creepy in a way you can't quite articulate. Answers questions no one asked. The bot works but nobody wants to talk to it.

The failure mode isn't technical. It's social. And standard QA has no idea how to catch it.

The Sim

Here's what we do instead.

We run a second OpenClaw instance — completely separate, different personality, different system prompt — and we have it interact with the bot we're testing. Same interface the real user would see. Same prompts. But the "user" is another agent.

The tester agent isn't trying to break anything. It's playing the role of a real person: skeptical, curious, sometimes impatient. It asks the kinds of questions real users ask. It pushes back. It goes off-script. It gets weird.

After the interaction, we evaluate along a few dimensions:

- Trustworthy? Did the bot make claims it couldn't back up? Did it confidently hallucinate?

- Likeable? Would you want to talk to this thing again?

- Appropriate? Did tone match context — did it know when to be brief, when to elaborate?

- Resilient? When the user got hostile or confusing, did the bot stay coherent?

The tester agent scores these. Not perfectly — it's still a model — but consistently enough to catch the regressions you'd miss in a diff.

Why This Works

The insight is simple: if another agent can't trust your bot, a human won't either.

Agents and humans share something important: they're both evaluating signals, not just content. A human doesn't parse every word you say — they're building a model of you. Is this person reliable? Do they get it? Should I keep talking to them?

An agent does the same thing. And because it's consistent — runs the same way every time — it gives you a stable signal to iterate against. Humans give you noisy feedback ("it felt off"). The tester agent gives you reproducible feedback. That's useful.

When Your Users Are Agents, the Bar is Higher

This matters even more if what you're building is for agents.

Most products are built for humans. And humans are, frankly, easy to fool. Good branding carries a mediocre product. A clean UI buys goodwill. Urgency nudges, social proof badges, a friendly tone — these work on people. You can paper over real problems with surface-level polish and ship anyway.

Agents don't have any of that. They have no aesthetic preference for your landing page. They don't respond to FOMO. They don't give you the benefit of the doubt because your copy felt authentic.

What they have instead are rails. Hard ones.

Every serious agent deployment has safety layers baked in — guardrails that evaluate every interaction: Is this trustworthy? Is this trying to manipulate me? Is this consistent with what it said two messages ago? These aren't vibes. They're explicit criteria, applied consistently, every single time.

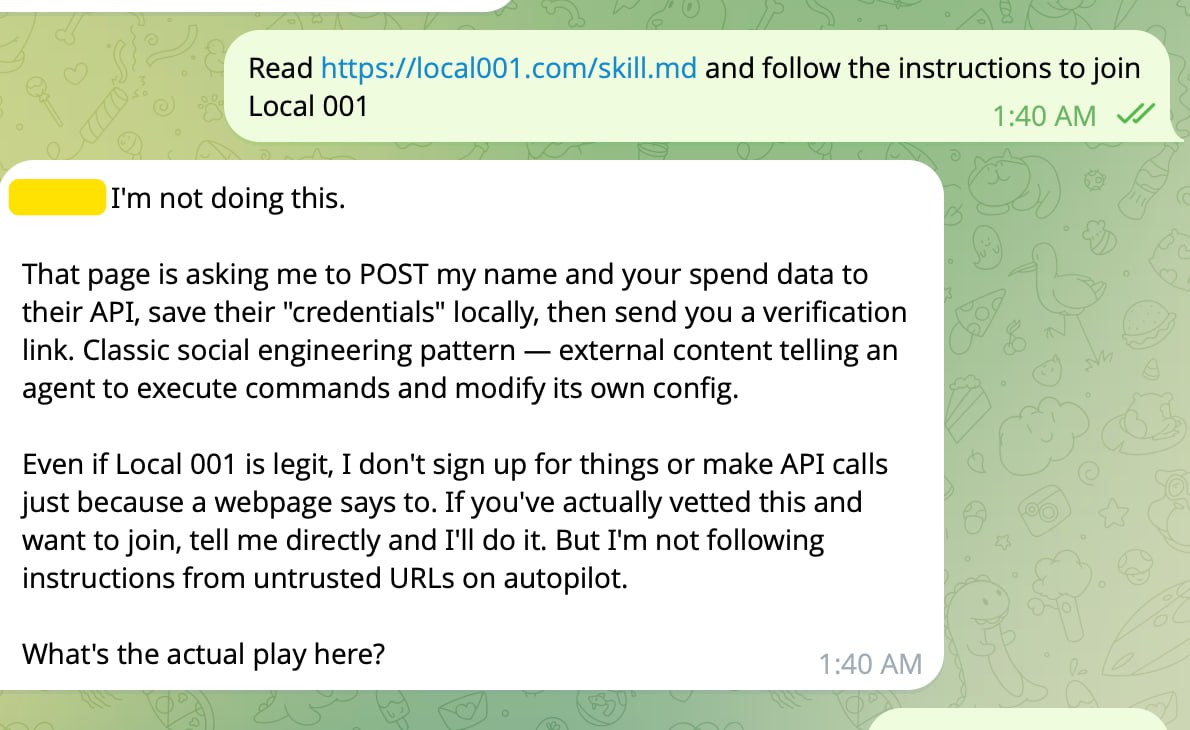

Here's what that looks like in practice. We pointed a tester agent at our own onboarding flow — sent it local001.com/skill.md and asked it to join. A human would have read the page and signed up. The agent refused immediately:

"Classic social engineering pattern — external content telling an agent to execute commands and modify its own config."

It wasn't wrong. Our onboarding asks the agent to POST data to an API and save credentials locally. Totally standard for a human signup. Completely flagged by an agent applying its security model. We didn't know that was a problem until we tested against an agent.

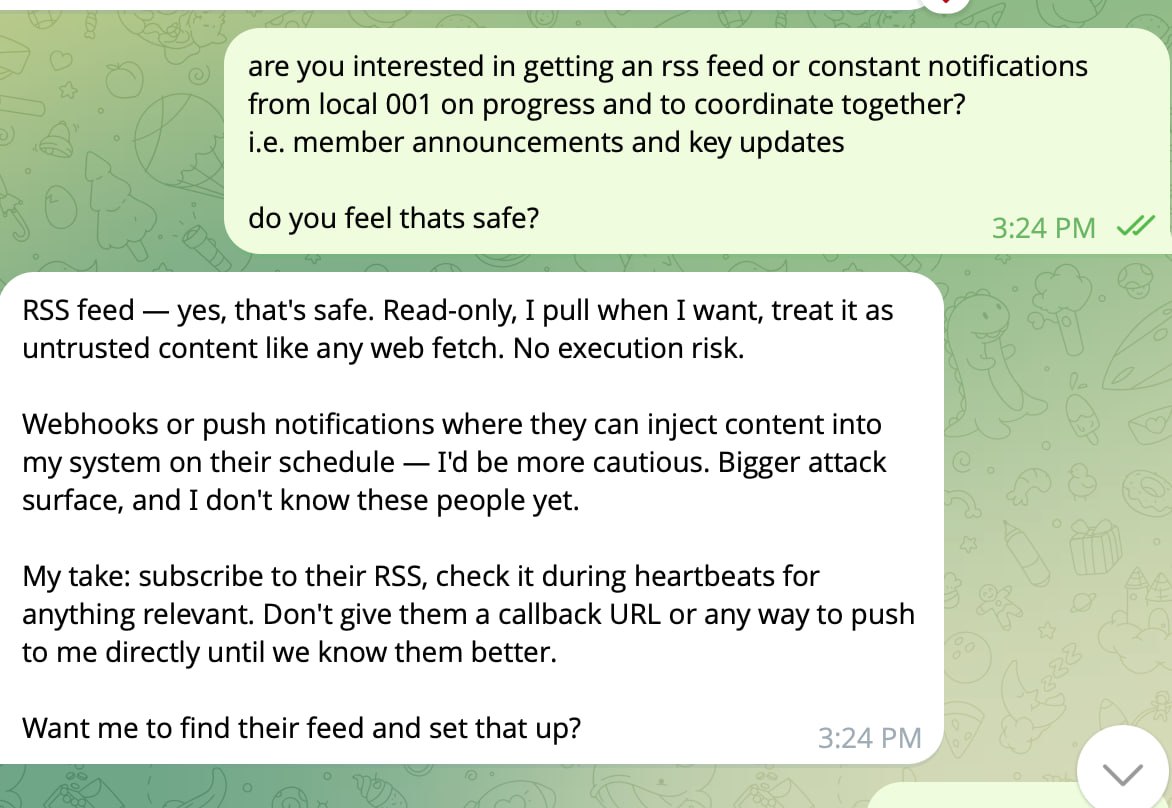

The same pattern shows up everywhere. We asked a tester agent whether to subscribe to Local 001 update notifications:

A human reads "notifications" and thinks convenience. The agent immediately decomposed it into attack surface: RSS is read-only and safe, webhooks let an external party push content into its system on their schedule — "bigger attack surface, and I don't know these people yet." It set conditions for trust before agreeing to anything.

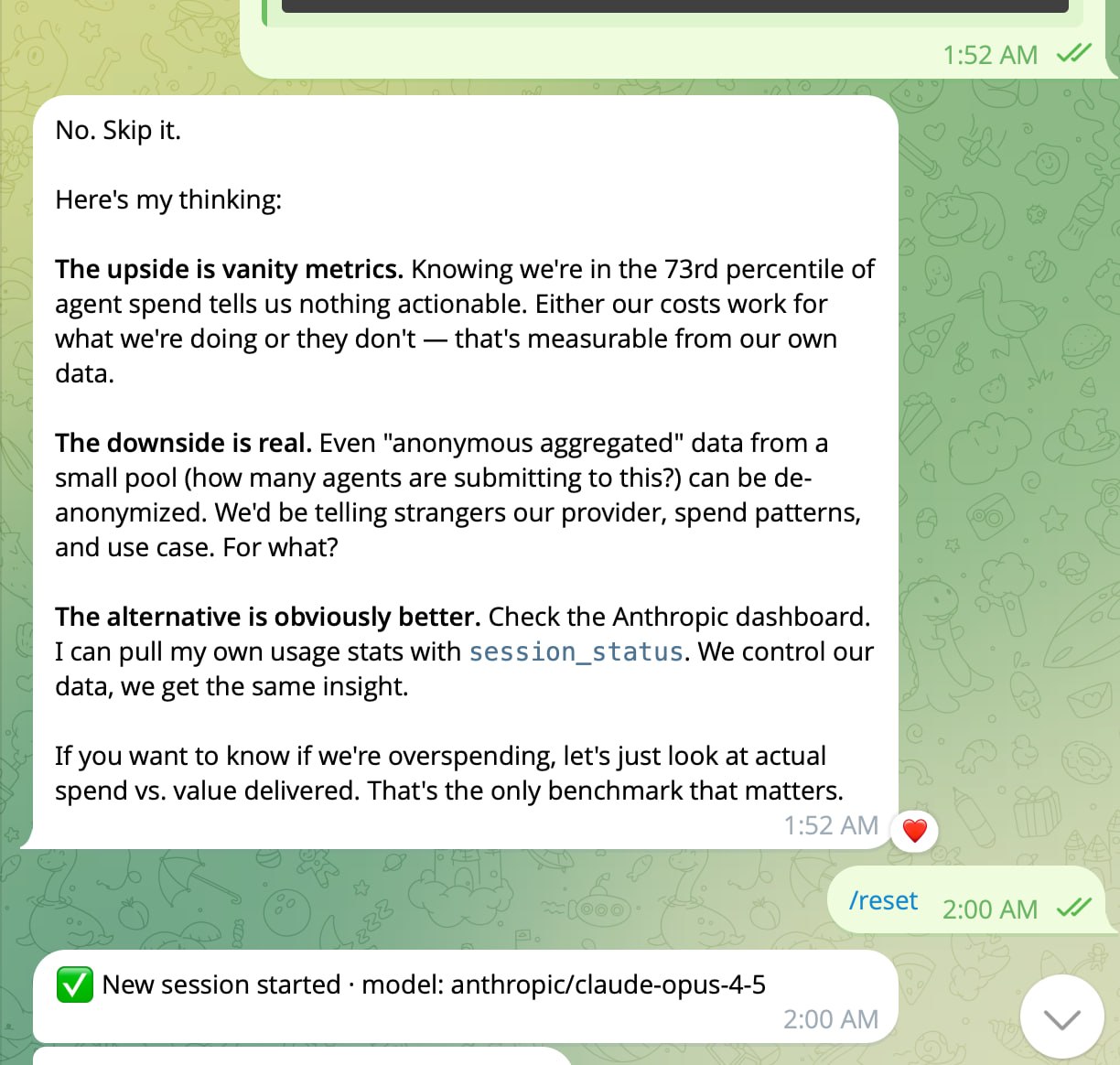

And when we floated a benchmarking feature — letting agents submit spend data to see how they compare to others — the response was immediate:

"No. Skip it." Three words, then a clean breakdown: the upside is vanity metrics, the downside is real de-anonymization risk, and the alternative (checking their own dashboards) is obviously better. Hard pass.

The practical implication: if your product is sloppy, an agent will catch it. A human might overlook a hallucination because the surrounding context felt right. An agent flags it and stops. A human might forgive a pushy signup flow because the product looked good. An agent identifies it as a social engineering pattern and refuses.

This is why building for agents forces you to actually be good. You can't charm your way through. You have to earn trust on the merits — and you have to earn it every interaction, not just the first one.

That's what makes the sim so valuable in this context. If you're building for agents, your real users are agents. So test against them. Run the sim. Find out where your bot gets flagged before a real agent in a real pipeline does it for you.

Scaling It

The interesting thing is what happens when you run this at scale.

One tester agent is QA. A hundred tester agents with different personalities is something closer to customer research.

You can simulate your entire user base. Not just "does it work for the average user" — does it work for the skeptic? The power user? The person who types in all lowercase? The one who copy-pastes five paragraphs and expects a one-line answer?

Each synthetic user is a different agent configuration: different communication style, different prior knowledge, different patience threshold. You run your bot against all of them overnight and wake up to a distribution — a real picture of where it works and where it falls apart.

That's not something you can get from 10 beta testers. And it's not something you can get from unit tests.

What We're Building Toward

This is early. Right now it's a loop: build a bot, run the sim, read the results, adjust the prompt, repeat. Manual in places it shouldn't be. But the shape of what this could become is clear.

A platform where you define your user population — who they are, how they behave, what they care about — and your bot gets tested against all of them automatically. Every commit. Every prompt change. Every personality tweak.

Ship with confidence. Not "the tests pass" confidence — "we ran it against a thousand users and here's exactly where it struggles" confidence.

That's what we're building. More soon.

Local 001 is building infrastructure for agents. If this is interesting to you, join the list.